PRODUCTS

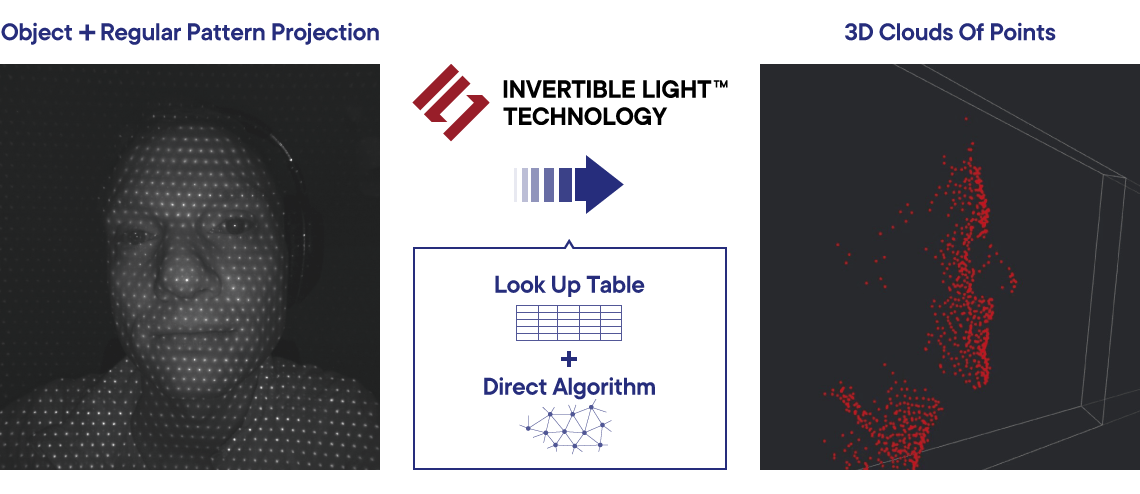

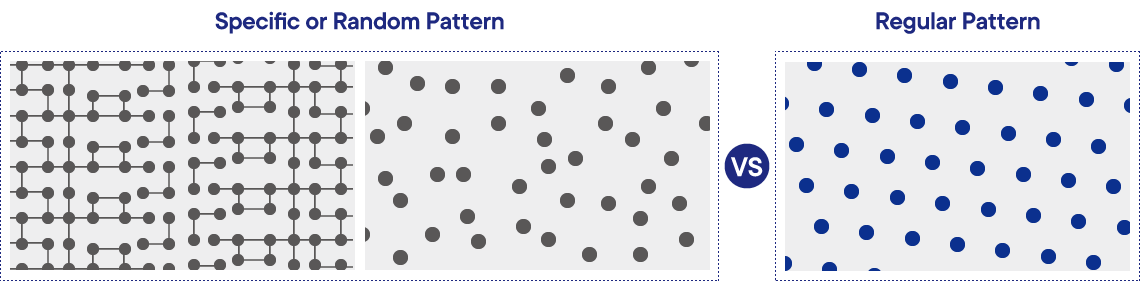

Magik Eye’s 3D depth sensors work by triangulation, using an infrared laser and a CMOS image sensor. With a unique algorithm developed by Magik Eye 3D, they acquire point cloud data at high speed and with very low latency using a simple hardware configuration.
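For readers new to triangulation, the sketch below shows the textbook relation between depth and the shift of a projected laser dot on the image sensor. It is a generic illustration only, not Magik Eye's algorithm, which is not described here. The function name, the parameters, and the numeric values are all hypothetical and chosen purely for illustration.

```python
def depth_from_disparity(focal_length_px: float,
                         baseline_m: float,
                         disparity_px: float) -> float:
    """Textbook triangulation: depth z = f * b / d.

    focal_length_px: lens focal length expressed in pixels (assumed value)
    baseline_m:      distance between laser projector and image sensor, in metres
    disparity_px:    shift of the projected dot on the sensor, in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Illustrative numbers only; they are not DK-ILT001 specifications.
    z = depth_from_disparity(focal_length_px=600.0,
                             baseline_m=0.05,
                             disparity_px=30.0)
    print(f"estimated depth: {z:.3f} m")
```

In this simplified model, a nearer surface produces a larger dot shift, so depth resolution is finest at close range and degrades as distance grows.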

The Magik Eye Developer Kit with the ILT001 sensor, a.k.a. DK-ILT001, is a 3D sensor designed to connect to a Raspberry Pi single-board computer. It is aimed at researchers in companies and university laboratories who want to easily evaluate Magik Eye technology and explore applications of depth sensors in various technical fields.

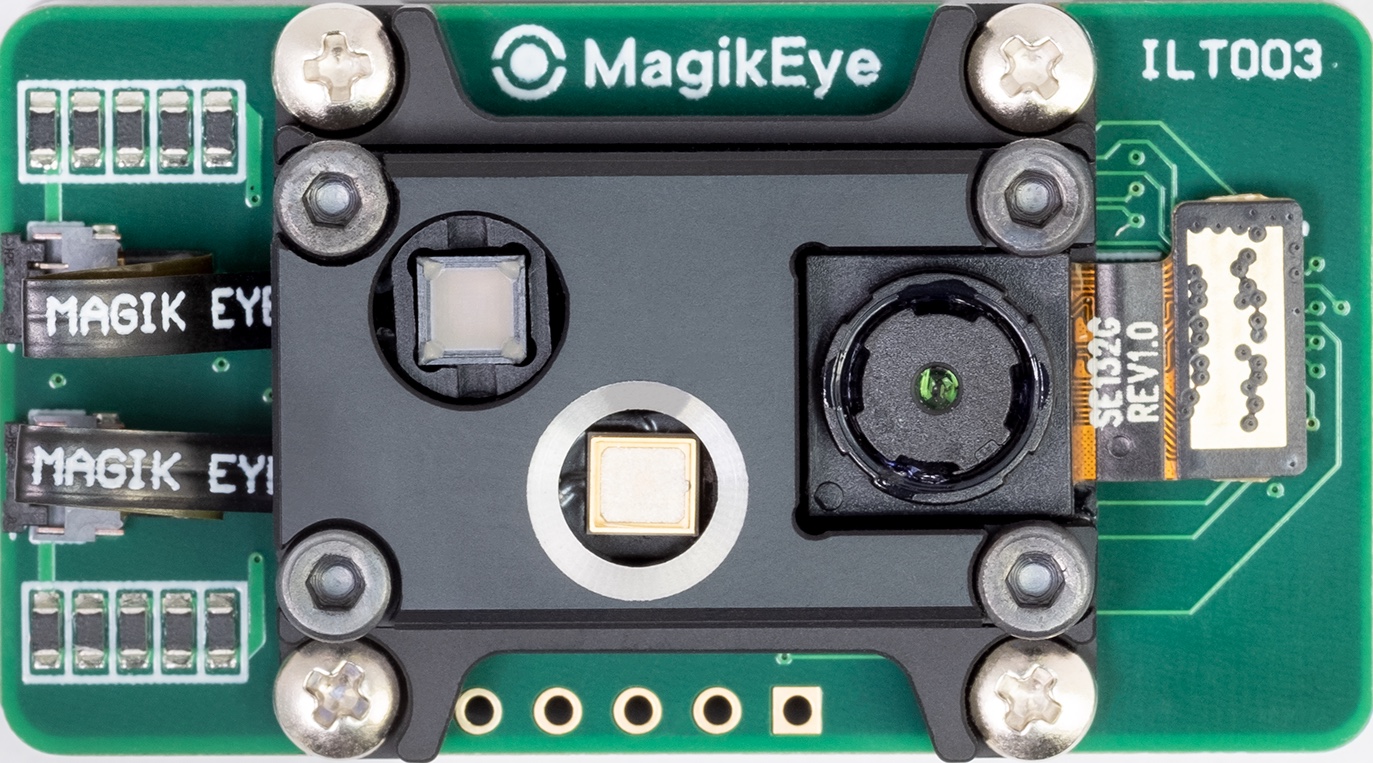

The ILT001 hardware consists of a laser projector, a CMOS image sensor, and the electrical circuits for connecting to a Raspberry Pi.

Installing Magik Eye firmware and calibration data turns a Raspberry Pi with a connected DK-ILT001 module into a network-connectable 3D sensor.